We Track Whether It Actually Worked

We don't just write content and move on. After it goes live, we check all 5 engines again to see if you're getting cited. If not, a new cycle starts automatically.

Post-Publish Monitoring

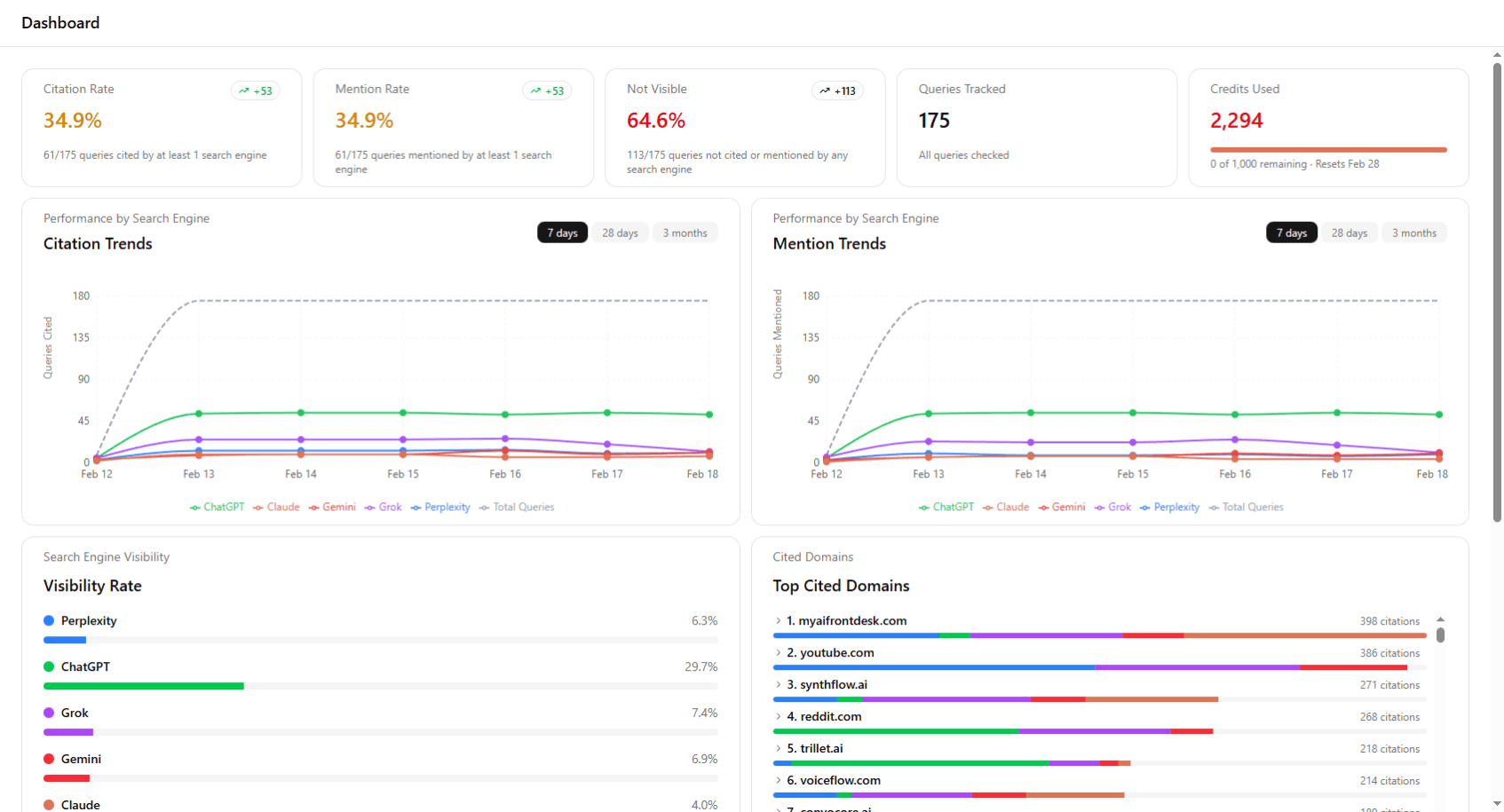

Most AEO tools move on once content is published. FogTrail keeps watching: after content goes live, we track citation performance across all 5 engines over time to see whether improvements materialize for each query you're targeting.

There's no instant “before/after” proof. AI citations don't work that way. Instead, you get ongoing visibility into how each query is trending. When we target a specific query with optimizations, you can see whether citations improve across engines over days and weeks. That's real accountability.

Gets Smarter Over Time

Every cycle teaches the system more about what works in your market. If a comparison article got you cited by Perplexity but not Claude, that's useful. If adding fresh dates improved your Gemini results, that's useful too.

Over time, the system learns which content styles, structures, and approaches actually get results for your specific product and market. Each cycle is more targeted than the last.

Data flywheel screenshot

Continuous Protection

Getting cited once isn't enough. AI engines update roughly every 48 hours. Competitors publish, rankings shift, models retrain. Citations you earned last month can disappear this month.

Each engine behaves differently. Perplexity is volatile, Claude is rock-solid, and Gemini degrades fastest without fresh content. FogTrail monitors at the cadence engines actually update, catches competitive displacement early, and triggers new optimization cycles automatically.

Continuous protection dashboard screenshot

FogTrail vs. Alternatives

| Capability | FogTrail | Most AEO Tools |
|---|---|---|
| After publishing | Keeps checking all 5 engines to see if citations improved | You check manually, or nobody does |
| How often | Every 48 hours, matching how often engines update | Weekly at best, often manual |
| Detail level | See results per engine, per query, with history over time | Just "cited" or "not cited" overall |
| When citations drop | Automatically starts a new cycle to fix it | You notice eventually, then figure it out yourself |
| Learning | Each cycle builds on what worked before | Starts from scratch every time |
| Stability | Tracks which citations hold and which are unreliable | Single snapshots, no trends |

Check frequency

Engines re-checked each cycle

Cycle tracked for results

New cycle if citations drop